In 2011, Harvard psychologist Steven Pinker published an ambitious and unusually provocative book: The Better Angels of Our Nature. The book centered around a startlingly optimistic thesis: that violence among humans had declined in the short and long term, and that the world had generally become a safer place. To convince skeptics, Pinker came armed with a litany of statistics showing falling rates of homicide, sexual violence, terrorism, and inter-state war. He also proposed several explanations for this ubiquitous decline, ranging from the increased prominence of the nation-state as a monopolist on violence, to the spread of democracy, literacy, and globalization.

The book was a roaring success, jumping to The New York Times bestseller list and winning plaudits from Bill Gates as “the most inspiring book he’d ever read.” More profoundly, the book also inspired a sunnier way of thinking about the world, which defied the unremitting tales of tragedy and woe that tended to dominate newspaper headlines. This way of thinking, dubbed ‘New Optimism,’ has gained increasing prominence in recent years, with commentators as wide-ranging as Jordan Peterson, Hans Rosling, and Matt Ridley joining the fold. Beyond the decline in violence, New Optimism has set its sights on a more comprehensive account of human well-being, focusing on statistics related to health, freedom, and educational attainment. According to New Optimists, the trajectory in each of these spheres is undeniably positive, pointing to a gradual improvement in the human condition.

Pinker’s book has been in vogue since its publication, and often crops up in undergraduate courses on international relations and development economics. I remember reading it over the summer of 2015 and being left enamored by its arguments, as well as those of New Optimism more generally. Even in 2016—a year defined by Donald Trump’s election, chaos in Syria, and North Korean nuclear tests—I persisted in being that annoying person who would use falling poverty rates, infant mortality rates, and homicide rates to emphatically argue: “the world is getting better!”

Despite this initial enthusiasm, I no longer feel comfortable pinning my colors to the New Optimist mast. Rather than New Optimism’s shoddy use of reported statistics or neglect of inequality, my uneasiness stems from something more fundamentally misguided about the New Optimist approach to human history. By obsessively focusing on observed outcomes, New Optimism ignores the distribution of potential outcomes that could have arisen, had history unfolded in a conceivably different direction.

While observed outcomes have undeniably improved in recent history, the distribution of potential outcomes has evolved far less favorably, as technological progress has given rise to a ‘grim left tail’ of potentially catastrophic outcomes without precedent in human history. This places humanity in a precarious position: while our material well-being has generally improved, it has come at the cost of us bearing an unprecedented degree of risk that, in the extreme, threatens to unravel the entire human endeavor. This should caution us against New Optimism’s optimistic assessment, and inspire us to develop more holistic measures of human progress, which take account of our exposure to catastrophic risk. Changing the yardstick should, in turn, also galvanize us to consider more radical policy ideas than New Optimist thinking typically prescribes.

Defining New Optimism

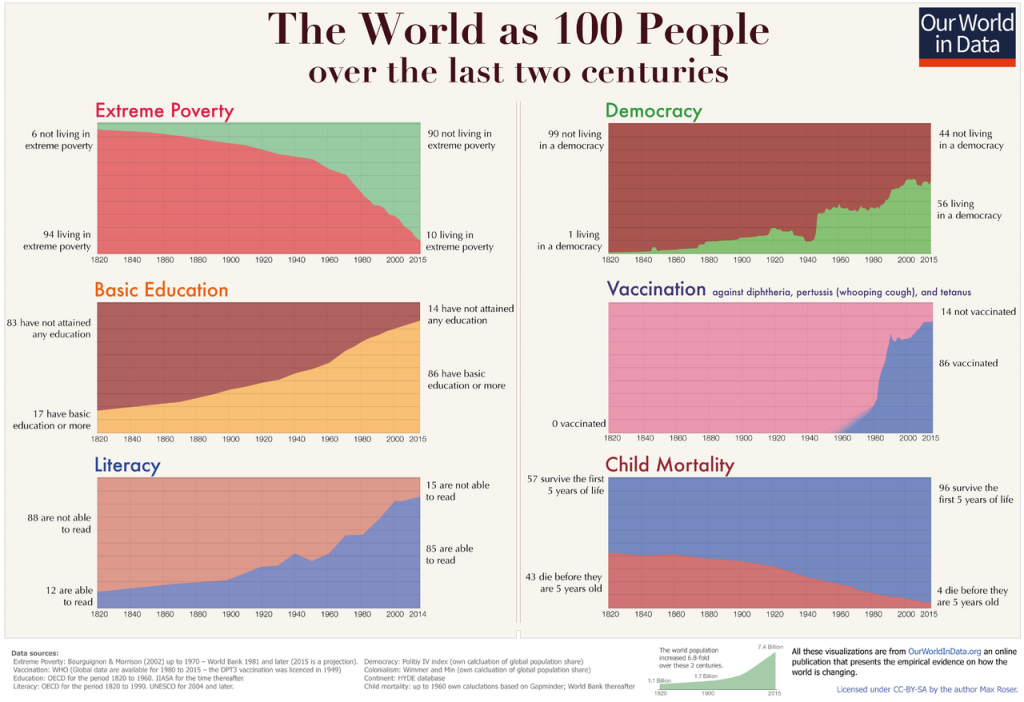

If the New Optimist position were summarized in one image, it would likely be the infographic shown here, created by Max Roser at Our World in Data. The infographic contains six charts, each of which shows how the world has changed over the last 200 years, if its population were condensed into 100 people.

These graphs are undeniably weighty. They show that over the last 200 years, extreme poverty rates have fallen from 94% to 6%; educational attainment has risen from 7% to 86%; literacy rates have increased from 12% to 85%; the proportion of people living in a democracy has risen from 1% to 56%; the percentage of people who have been vaccinated against diphtheria, whooping cough, and tetanus has risen from 0% to 86%; and child mortality rates have declined from 43% to 4%. Taken alongside Pinker’s statistics about the decline in violence, it is seemingly difficult to avoid the conclusion that the world has improved, and that humanity is generally headed in the ‘right direction.’

These accomplishments should not be belittled. The fact that fewer children are dying, more people can read, and more people are being vaccinated is an unmitigated success, of a kind that is difficult to justly articulate. However, these graphs are necessarily limited in what they can tell us about the human condition and hence, if read in isolation, provide an incomplete and potentially dangerous picture of our trajectory.

The Distribution of History

To get to the heart of the argument, let’s introduce a heuristic device which probes the counterfactual or ‘what if’ moments of history. This device rests on the idea that history is inherently random: characterized by events that, had one or two things been different, would have unfolded in radically different directions. There are myriad examples of these ‘sliding door’ moments: one of the most notorious occurred on 8th November 1939, at a large beer hall in Munich. That night, Adolf Hitler had planned to give a speech commemorating the Beer Hall Putsch, and then fly back to Berlin the following morning to plan the imminent war with France. However, due to fog, Hitler ended his speech one hour early, at 9:07pm, in order to catch an evening train back to Berlin instead. At 9:20pm, a bomb planted by anti-Nazi dissident Georg Elser exploded near where Hitler had been standing, killing 8 people. Had the weather been clearer that day, Hitler would likely have stuck with his schedule, the assassination attempt would probably have been successful, and the trajectory of the Holocaust and WWII would have been radically altered.

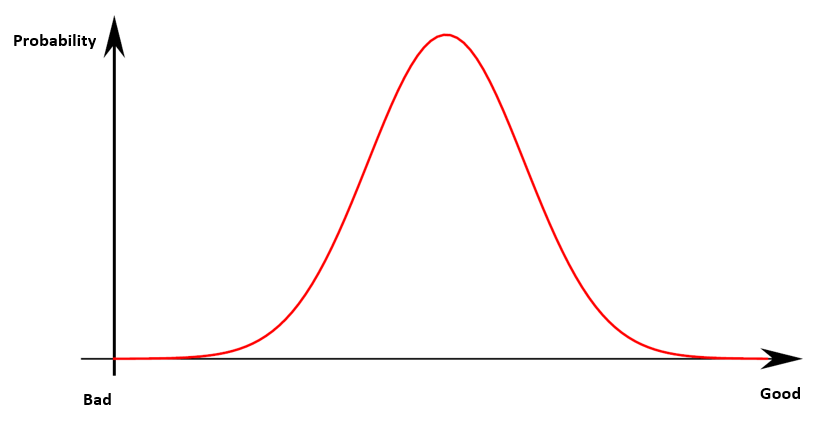

If we imagine human affairs as beholden to the ripple effects caused by random events, we can imagine a distribution of history, which captures the different states-of-the-world that could have arisen if small, idiosyncratic details had been slightly different. Some of these counterfactual states-of-the-world would have been better, all else historically equal, while others would have been decidedly less desirable. To give a more recent example: in one conceivable state-of-the-world, the U.S. is more aggressive in its surveillance of Khalid al-Mihdhar and Nawaf al-Hazmi, and the entire 9/11 plot is successfully pre-empted; in another, the passengers of United Airlines Flight 93 unsuccessfully fight back against their hijackers, and a fourth plane crashes into the United States Capitol. The clear normative difference between these outcomes allows us to speculate on a distribution of histories, where different potential states-of-the-world are arranged on a spectrum from least to most desirable.

Before proceeding, a few caveats are in order. First, speculating on counterfactual outcomes is obviously an immensely difficult task. We inhabit a highly complex environment, which makes it extremely difficult to get a robust understanding of how different parts of the system might have interacted in different ways to produce different aggregate outcomes. This has made counterfactual speculation an unfashionable intellectual exercise. ‘The past is in the past,’ so to speak, which makes us better off focusing on how and why events unfolded in the way they did, rather than getting distracted by imagining ways they could have unfolded otherwise.

This objection has merit; though it is strongest when we imagine studying the past as an isolated exercise, with no bearing on the present or future. This is fine when answering some questions—such as, “Why did World War II break out?”—but for others, the past cannot be so easily compartmentalized. When we ask questions like “is humanity headed in the right direction?”, we are not just interested in the past; we are also interested in the future. Here, speculating on counterfactuals is unavoidable: no state-of-the-world has been realized yet, which forces us to consider a range of scenarios. For this task, imagining past counterfactuals seems important: if we narrowly avoided disaster before, this appears to have a bearing on whether we are likely to avoid disaster in the future.

Another aspect of this framework worth clarifying is the x-axis, where states-of-the-world are ranked from bad to good. This invites the question: how do we determine whether some states-of-the-world are better than others? To avoid getting sidetracked into debating the merits of different value-systems, I have left the framework deliberately agnostic to different conceptions of ‘the good.’ All this framework requires is two premises: that different states-of-the-world can be compared (no moral relativism), and that some states-of-the-world are unambiguously better than others. Nuclear war and the eradication of disease sit at opposite ends of this spectrum, and it should not be difficult to know which side is which.

A final feature worth clarifying is that I assume initially that history is normally distributed, meaning that, in counterfactual universes, middle-of-the-road outcomes would be most likely and extremely good/bad outcomes relatively rare. This is an over-simplification; but it is used to keep the analysis simple, and with the acknowledgement that it could plausibly be extended to a broader array of initial distributions. With these caveats in mind, I want to turn to speculating on how the distribution of history has changed over the last few centuries, and how it might change in the future.

The 17th Century: Clipped Tails

The 17th century is the early dawn of the modern era—before the industrial revolution, before the Enlightenment, and before what New Optimism tends to consider the ground zero of human progress. When we think of the different ways it could have unfolded, a range of outcomes come to mind. In one state-of-the-world, Isaac Newton’s parents never meet, Principia Mathematica is never written, and human understanding of physics is set back several decades—a decidedly ‘bad’ outcome. In another state-of-the-world, Emperor Ferdinand II chooses not to undermine the 1555 Peace of Augsburg and the Thirty Years’ War is avoided—a comparatively ‘good’ outcome.

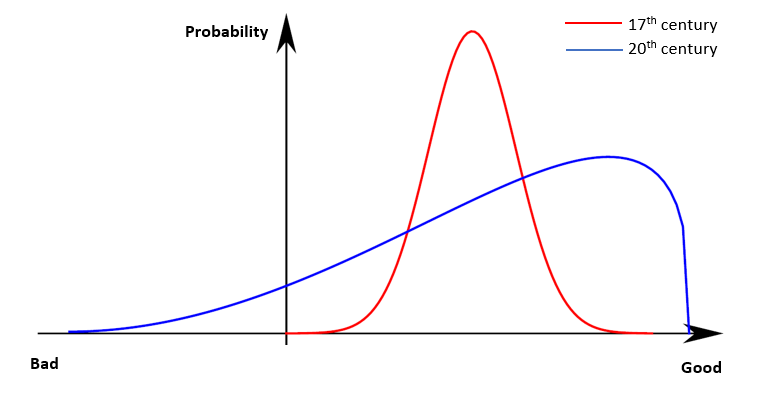

While it is easy to imagine different states-of-the-world, the 17th century was naturally limited in the range of outcomes it could support. Extremely bad human-induced cataclysms are inconceivable, since humanity lacked technology sophisticated enough to bring about this level of destruction. Under any sensible re-running of history, it is impossible to imagine the Thirty Years’ War, fought with swords and muskets, mutating into a conflict which threatens the entire human species. By the same token, extremely ‘good’ outcomes (e.g. the harnessing of nuclear fusion) are also inconceivable: humanity lacked the technology necessary to support this possibility, as well as the means to acquire it.

The 20th Century: I Am Become Death

However, the distribution of history changed dramatically in the 20th century, at a time that can be precisely pinpointed: 5:30am, July 16th, 1945. This was the moment of the Trinity Test, the first successful detonation of a nuclear bomb, a weapon which gave humanity the capability to wreak unprecedented destruction. Such a thought was undoubtedly going through the mind of Robert Oppenheimer, the leader of the Manhattan Project, when, upon detonation, he recalled the now-famous line of Hindu scripture: “Now I am become Death, the destroyer of worlds.”

While nuclear weapons were quick to be used, it took a while for humanity to grasp the true stakes of what these weapons implied. In the 1980s, scientists began to grasp the possibility of a nuclear winter arising if the U.S. and the Soviet Union ever engaged in an all-out nuclear war. Were this to occur, firestorms would loft so much soot into the atmosphere that it would essentially block out the sun, leading to a probable temperature drop of 20°C. Under these circumstances, there is a fair chance humanity would not have recovered, devastated by extreme weather, civilizational collapse, and eviscerated food supplies.

Living on the Brink

It is only recently, as archives have been declassified, that we have begun to realize how close we came to this horrific outcome. The Cold War was characterized by a number of ‘near misses’: the most hair-raising concerns Vasily Arkhipov, a Soviet naval officer who later rose to the rank of vice admiral. In October 1962, at the height of the Cuban Missile Crisis, Arkhipov was stationed in a nuclear-armed Soviet submarine below the Caribbean Sea, off the coast of Cuba. Detecting an unidentified vessel in its vicinity, the U.S. Navy began dropping depth charges in an attempt to force the submarine to the surface. Unbeknownst to the Americans, Arkhipov’s submarine was suffering from transmission problems and had lost contact with Moscow and the outside world.

Hearing the depth charges exploding, the captain of the sub, Valentin Savitsky, concluded that World War III had started and voted to fire the submarine’s nuclear torpedo at the U.S. ships overhead. However, unlike on other Soviet submarines, a nuclear launch on this vessel required unanimous agreement among three officers: the captain, the political officer, Ivan Maslennikov, and the executive officer, Vasily Arkhipov. While Savitsky and Maslennikov voted in favor of launch, Arkhipov voted to surface and await instructions from Moscow. An argument broke out; fortunately, Arkhipov held firm and forced the others to accede to surfacing. Had Arkhipov not prevailed, the submarine would have fired a nuclear weapon at U.S. forces, which would very likely have led to all-out nuclear war, given both countries’ policy of massive retaliation.

This story has profound implications. Crucially, it implies that the distribution of the 20th century was radically different from that of the 17th century. Technological progress made ‘good’ outcomes more likely, like lower infant mortality and increased life expectancy. This is illustrated by the greater probability mass to the right of the previous curve. However, at the same time, it also gave rise to a ‘grim left tail’ of catastrophic outcomes, beyond anything previously witnessed in humanity’s brutal history.
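The shape of this argument can be made concrete with a small numerical sketch. It is only an illustration under loudly stated assumptions: the 17th century is modeled as a standard normal distribution, and the 20th century as a mixture of a normal bulk shifted toward better outcomes and a small catastrophic component far to the left. Every number below (the means, the 5% tail weight, the cut-off for ‘catastrophe’) is hypothetical and chosen purely to show the pattern; none of it is an estimate from this essay or from any data.

```python
from statistics import NormalDist

# All parameters are hypothetical and purely illustrative.
BASELINE = NormalDist(mu=0.0, sigma=1.0)      # "17th century": clipped tails
ORDINARY = NormalDist(mu=1.0, sigma=1.0)      # "20th century" bulk, shifted toward good
CATASTROPHE = NormalDist(mu=-8.0, sigma=1.0)  # the grim left tail
TAIL_WEIGHT = 0.05                            # assumed share of histories ending in catastrophe
THRESHOLD = -5.0                              # assumed cut-off for a catastrophic outcome


def mixture_mean(weight: float) -> float:
    """Average outcome of the two-component mixture."""
    return (1 - weight) * ORDINARY.mean + weight * CATASTROPHE.mean


def mixture_cdf(x: float, weight: float) -> float:
    """Probability that the mixture yields an outcome at or below x."""
    return (1 - weight) * ORDINARY.cdf(x) + weight * CATASTROPHE.cdf(x)


baseline_mean = BASELINE.mean
baseline_tail = BASELINE.cdf(THRESHOLD)
modern_mean = mixture_mean(TAIL_WEIGHT)
modern_tail = mixture_cdf(THRESHOLD, TAIL_WEIGHT)

print(f"average outcome: baseline {baseline_mean:.2f}, with left tail {modern_mean:.2f}")
print(f"chance of catastrophe: baseline {baseline_tail:.2e}, with left tail {modern_tail:.2e}")
```

Under these assumed numbers the average outcome rises even as the chance of landing in catastrophic territory grows by several orders of magnitude, which is the pattern a focus on observed outcomes alone would miss.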

This grim left tail points to something deeply flawed about the New Optimist approach. By focusing on observed outcomes, New Optimism ignores the potentially catastrophic outcomes that could have easily arisen. These potential outcomes cannot be overlooked: the fact that humanity narrowly avoided annihilation is the most salient fact of the 20th century, not the fact that life expectancies rose or poverty decreased. The very idea of New Optimism would have been risible had there been nuclear war in 1962; yet we only avoided this due to the cool-headedness of Vasily Arkhipov. The fact that New Optimism’s healthy, prosperous, and educated world rests on the idiosyncratic temperament of a single Soviet officer makes it a very fragile thesis indeed. And in case we’re tempted to write it off, Arkhipov’s moment of decision is far from the only nuclear close call.

The 21st Century: Thick Tails

As we stagger into the 21st century, the grim left tail continues to stalk our progress. Most obviously, nuclear weapons still exist, lying in wait in underground silos and in submarines beneath the ocean’s surface. Unnervingly, many of these weapons remain on hair-trigger alert, meaning they can be launched in as little as 3-5 minutes: about the time it takes to boil an egg. As a species, we have become alarmingly habituated to the fact that the means of our demise lie just a hair’s-breadth away.

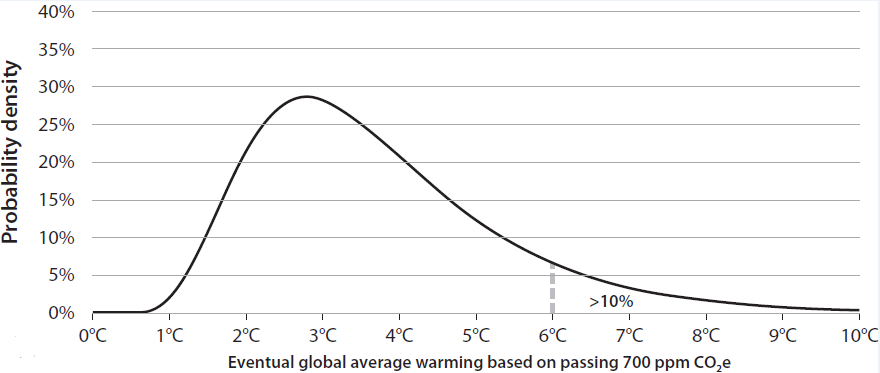

To compound matters, the threat of nuclear war has been accompanied by ‘new’ risks that were either non-existent or not fully acknowledged in the 20th century. Most famous of these is anthropogenic climate change: the increase of CO2 concentrations in the Earth’s atmosphere due to our insatiable burning of fossil fuels. For most of human history, atmospheric CO2 has remained at around 270 parts per million (ppm). However, in the last 300 years, carbon-led industrialization has caused CO2 concentrations to increase significantly, reaching 409 ppm in 2020. This has already led to bad outcomes for humanity, such as increased hurricane activity, droughts, and wildfires. These will likely be exacerbated in future, yet there remains a good deal of uncertainty as to ‘how bad’ climate change might eventually become.

Under various business-as-usual scenarios, humanity is poised to cross the 700 ppm CO2 threshold towards the end of the 21st century. Due to poorly understood feedback effects in the Earth’s climate system, we do not have an ironclad grasp of what this implies for temperature increases. At present, scientists believe that crossing the 700 ppm threshold will most likely lead to temperature increases of 2-3°C: a tragic outcome, and one that would entail severe consequences disproportionately borne by the most vulnerable people in the world. However, due to modelling uncertainty, we cannot rule out the possibility of even more severe increases: 6°C, 8°C or even 10°C of warming. This gives rise to another familiar graph, except now the grim left tail has been replaced by a grim right tail of truly catastrophic temperature change. It is hard to comprehend the magnitude of the consequences should humanity find itself in this position. David Wallace-Wells likens it to Dante’s Inferno: a horrific hellscape of inundated land masses, uninhabitable deserts, and shattered ecosystems.

If this were not alarming enough, humanity stands on the cusp of developing new technologies that could bring catastrophe even closer to our fingertips. The COVID-19 pandemic has already exacted a monumental toll in terms of lives lost and economic output forgone. In the future, advances in synthetic biology could make man-made pandemics possible, on a scale far in excess of COVID-19. Humanity already appears capable of such a feat: in 2011, a group of scientists at Erasmus Medical Center in Rotterdam created an artificially mutated strain of the H5N1 (‘bird flu’) virus that retained the high mortality rate (about 60% in humans) but was airborne, making it about as contagious as the common cold. Fortunately, at present, the equipment and technological know-how to create these super-viruses remain confined to the world’s top research institutions: environments constrained by tight regulation and stringent codes of ethics. However, in future, theoretical insights or improvements in lab equipment might make synthetic biology much more democratized. It is not inconceivable that, sometime this century, a lone maniac with a knack for biochemistry could create a homemade COVID-style virus. Were this state of affairs to arise, humanity’s remaining time on Earth would likely be very short indeed.

Finally, advances in artificial intelligence have ignited fears that it might heighten the risk of nuclear war. AI-enabled cyberattacks could facilitate hacking into opponents’ nuclear arsenals, while AI-powered data analytics could increase the detection capabilities of aircraft and sonar buoys, making it easier for states to pre-emptively identify the positions of their opponents’ nuclear weapons. If this occurs, states might be better able to overwhelm their opponents’ second-strike capabilities with a targeted first strike, undermining the logic of mutually assured destruction. The fear of AI capability might itself become a threat even without its realization, if one actor decides to pre-emptively attack an opponent.

Even discounting its impact on the nuclear balance, advanced artificial intelligence might pose a more direct existential threat. In a recent survey, AI experts deemed it more likely than not that we would develop human-level machine intelligence (HLMI) by the end of the 21st century, where HLMI was defined as machines that met or exceeded human benchmarks across the full suite of human intelligence. If this were to occur, humanity’s fate would seem beholden to the values that these machines were programmed to optimize. If, through mistake or malice, these values were misaligned with humanity’s best interests, we would be forced to compete for scarce resources with a superior intelligence whose values were inimical to our own. If the theory of natural selection teaches us anything, it is that this state of affairs rarely resolves itself in favor of the species with the inferior intellect. Creating misaligned HLMI could be a terminal Darwinian mistake.

The Sword of Damocles

Together, these new risks point to a thickening of the grim left tail. This puts us in a precarious position: one best illuminated by the apocryphal tale of Damocles, an obsequious courtier in the Sicilian kingdom of Dionysius II. According to legend, Damocles was pandering to his king, exclaiming that Dionysius was truly fortunate as a man of power and riches. In response, Dionysius offered to switch places with Damocles for a day so that Damocles could taste that fortune first-hand. Damocles accepted his proposal and sat surrounded by a luxurious banquet of the finest food that the kingdom could muster. However, Dionysius, who had made enemies during his rule, arranged for a sword to hang above the throne, attached to the ceiling by a single horse’s hair. This was to evoke what it felt like to be king: to be surrounded by fortune, but at constant risk of being killed by potential usurpers. After a few hours under the sword’s shadow, Damocles begged to swap places back with the king, humbled by the ever-present peril faced by those in positions of power.

In a profound way, the position of Damocles captures the position humanity finds itself in during the early stages of the 21st century. We sit at a great banquet of material luxury, underpinned by wondrous advances in healthcare, human rights, and education. However, our material abundance is undermined by a sword hanging precariously over us: a sword that is becoming increasingly detached as the day progresses. The problem with New Optimism is that it focuses only on the banquet before us; like Damocles, we should also be concerned about the sword that dangles over our necks.

Living With the Grim Left Tail

How should we live in a world haunted by a grim left tail? First, and most obviously, we should not be complacent about our past trajectory, and not indulge in the New Optimist’s flawed fixation on observed outcomes. Second, we should develop more holistic measures of human progress: ones that take into account our exposure to catastrophe. Rudimentary versions of these measures already exist: for instance, the Doomsday Clock attempts to measure how close we are to nuclear war, while Cambridge University’s Centre for the Study of Existential Risk has taken promising first steps in measuring catastrophic risk exposure more generally. At present, these initiatives are little more than fringe academic pursuits. However, if we hope for a more comprehensive understanding of human progress, they ought to be brought further into the limelight, and have their methodologies more thoroughly scrutinized and improved.

Most importantly, we need to find ways to shrink or eliminate the grim left tail that looms over us. Based on the discussions of this piece, a few obvious recommendations spring to mind. Nuclear disarmament, radical decarbonization, and careful stewardship of emerging technologies would all shrink the grim left tail, making us less likely to succumb to catastrophe. Meeting each of these objectives will entail overcoming massive coordination problems largely without precedent in human history. The incentives nation-states have to resist disarmament are among the strongest arguments for global-scale governance, while exercising stewardship over emerging technologies may well involve curtailing their trade, as well as limiting free research and publication.

In any case, resolving these issues is likely to require a profound departure from the status quo, far more so than the New Optimist prescription to restate the values of the Enlightenment, articulated in Pinker’s more recent book, Enlightenment Now. This is a daunting prospect, and yet it is a challenge we must meet head on. If we continue to live under the specter of the grim left tail, we might enjoy years, decades, or even centuries of increasingly improved material conditions. But eventually, at a time and place that will thereafter be immortalized, we will succumb to a catastrophe beyond anything we have previously witnessed. This, and not the rosy statistics of New Optimism, is the most important fact of our time, and it is where our attention should be more fully devoted.
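The arithmetic behind ‘eventually’ can be sketched in a few lines. This is a simplified illustration, not a forecast: it assumes a constant and independent chance of catastrophe each year, and the 0.1% annual figure is a hypothetical placeholder rather than an estimate drawn from this essay or any study. The function name and constant are likewise invented for the example.

```python
def survival_probability(annual_risk: float, years: int) -> float:
    """Chance of avoiding catastrophe for `years` consecutive years,
    assuming the same independent risk applies in every year."""
    if not 0.0 <= annual_risk <= 1.0:
        raise ValueError("annual_risk must lie between 0 and 1")
    if years < 0:
        raise ValueError("years must be non-negative")
    return (1.0 - annual_risk) ** years


# Hypothetical figure for illustration only: a 0.1% chance of catastrophe per year.
ASSUMED_ANNUAL_RISK = 0.001

for horizon in (10, 100, 1_000, 10_000):
    chance = survival_probability(ASSUMED_ANNUAL_RISK, horizon)
    print(f"{horizon:>6} years: {chance:.4f} chance of no catastrophe")
```

Under this assumption, even a small annual risk compounds: survival looks very likely over a decade, but the odds fall steadily as the horizon lengthens. Shrinking the grim left tail corresponds to lowering the annual risk itself, which is the only lever that changes the long-run picture.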